Fashion Research

Fashion plays a fundamental role in everyday lives and conveys a large amount of non-verbal information. However, dealing with fashion is a very complicated task due to the large amount of subjectiveness which makes it near-impossible to annotate large sets of data. The research here tackles these issues in order to make sense out of the vast amounts of fashion data on the web.

-

Fashion Style in 128 Floats

In this work we present an approach for learning features from large amounts of weakly-labelled data. Our approach consists training a convolutional neural network with both a ranking and classification loss jointly. We do this by exploiting user-provided metadata of images on the web. We define a rough concept of similarity between images using this metadata, which allows us to define a ranking loss using this similarity. Combining this ranking loss with a standard classification loss, we are able to learn a compact 128 float representation of fashion style using only noisy user provided tags that outperforms standard features. Furthermore, qualitative analysis shows that our model is able to automatically learn nuances in style.

-

Neuroaesthetics in Fashion

Being able to understand and model fashion can have a great impact in everyday life. From choosing your outfit in the morning to picking your best picture for your social network profile, we make fashion decisions on a daily basis that can have impact on our lives. As not everyone has access to a fashion expert to give advice on the current trends and what picture looks best, we have been working on developing systems that are able to automatically learn about fashion and provide useful recommendations to users. In this work we focus on building models that are able to discover and understand fashion. For this purpose we have created the Fashion144k dataset, consisting of 144,169 user posts with images and their associated metadata. We exploit the votes given to each post by different users to obtain measure of fashionability, that is, how fashionable the user and their outfit is in the image. We propose the challenging task of identifying the fashionability of the posts and present an approach that by combining many different sources of information, is not only able to predict fashionability, but it is also able to give fashion advice to the users.

-

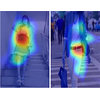

Clothes Segmentation

In this research we focus on the semantic segmentation of clothings from still images. This is a very complex task due to the large number of classes where intra-class variability can be larger than inter-class variability. We propose a Conditional Random Field (CRF) model that is able to leverage many different image features to obtain state-of-the-art performance on the challenging Fashionista dataset.

Publications

@InProceedings{KikuchiICCVW2019,

author = {Kotaro Kikuchi and Kota Yamaguchi and Edgar Simo-Serra and Tetsunori Kobayashi},

title = {{Regularized Adversarial Training for Single-shot Virtual Try-On}},

booktitle = "Proceedings of the International Conference on Computer Vision Workshops (ICCVW)",

year = 2019,

}

@InProceedings{TakagiICCVW2017,

author = {Moeko Takagi and Edgar Simo-Serra and Satoshi Iizuka and Hiroshi Ishikawa},

title = {{What Makes a Style: Experimental Analysis of Fashion Prediction}},

booktitle = "Proceedings of the International Conference on Computer Vision Workshops (ICCVW)",

year = 2017,

}

@InProceedings{RubioICCVW2017,

author = {Antonio Rubio and Longlong Yu and Edgar Simo-Serra and Francesc Moreno-Noguer},

title = {{Multi-Modal Embedding for Main Product Detection in Fashion}},

booktitle = "Proceedings of the International Conference on Computer Vision Workshops (ICCVW)",

year = 2017,

}

@InProceedings{InoueICCVW2017,

author = {Naoto Inoue and Edgar Simo-Serra and Toshihiko Yamasaki and Hiroshi Ishikawa},

title = {{Multi-Label Fashion Image Classification with Minimal Human Supervision}},

booktitle = "Proceedings of the International Conference on Computer Vision Workshops (ICCVW)",

year = 2017,

}

@InProceedings{SimoSerraCVPR2016,

author = {Edgar Simo-Serra and Hiroshi Ishikawa},

title = {{Fashion Style in 128 Floats: Joint Ranking and Classification using Weak Data for Feature Extraction}},

booktitle = "Proceedings of the Conference on Computer Vision and Pattern Recognition (CVPR)",

year = 2016,

}

@InProceedings{SimoSerraCVPR2015,

author = {Edgar Simo-Serra and Sanja Fidler and Francesc Moreno-Noguer and Raquel Urtasun},

title = {{Neuroaesthetics in Fashion: Modeling the Perception of Fashionability}},

booktitle = "Proceedings of the Conference on Computer Vision and Pattern Recognition (CVPR)",

year = 2015,

}

@InProceedings{SimoSerraACCV2014,

author = {Edgar Simo-Serra and Sanja Fidler and Francesc Moreno-Noguer and Raquel Urtasun},

title = {{A High Performance CRF Model for Clothes Parsing}},

booktitle = "Proceedings of the Asian Conference on Computer Vision (ACCV)",

year = 2014,

}